Nvidia has executed its largest strategic investment to date, agreeing to a massive asset and licensing deal with AI chip startup Groq to fortify its position in the rapidly growing inference market.

The Deal:

- The Price Tag: A $20 billion cash transaction centered on Groq’s core inference assets.

- The Structure: Nvidia secures a non-exclusive license to Groq’s low-latency technology and is hiring Groq’s founder and key engineering leaders.

- The Independence: Groq will continue to operate as an independent company under new leadership, keeping its GroqCloud platform outside the scope of the transaction.

The Details:

This move allows Nvidia to integrate Groq’s specialized “Language Processing Unit” (LPU) architecture, known for its extremely low-latency inference, directly into its own AI factory platform. The deal follows a recent industry pattern of “talent-and-IP” arrangements, which let large companies absorb critical technology and leadership without a full corporate takeover. The focus is squarely on expanding Nvidia’s capabilities in real-time workloads, ensuring its hardware remains the default choice as AI models move from development labs into daily production.

Why It Matters:

The center of gravity in AI spending is shifting from training (making models smart) to inference (using models in real time). In the inference war, latency is the enemy. By licensing Groq’s designs, which are purpose-built for near-instant response times, Nvidia is aggressively securing the infrastructure for the next generation of AI agents, voice assistants, and live search tools. For the industry, this signals tightening consolidation: as Nvidia bakes faster inference options directly into its ecosystem, the space for independent hardware challengers to survive shrinks significantly.

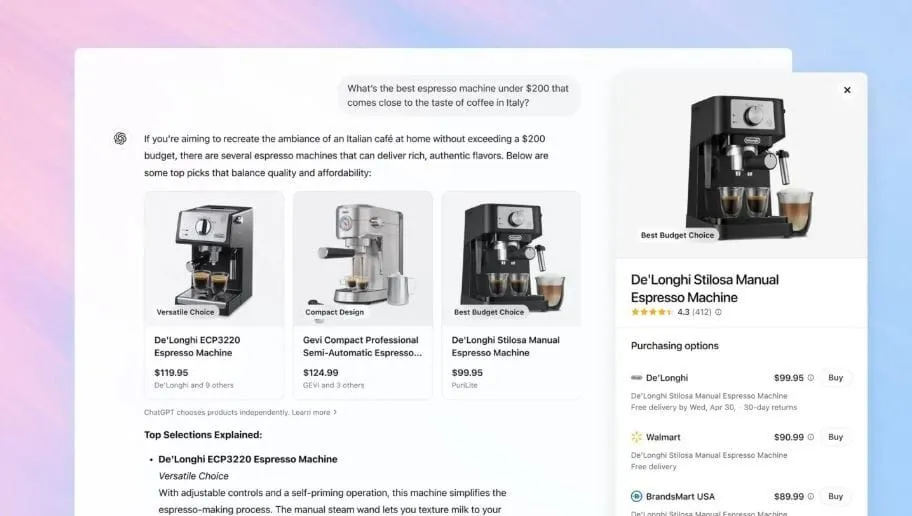

OpenAI Explores “Intent-Based” Ads to Monetize ChatGPT’s Free Tier

OpenAI is actively exploring the integration of advertising into ChatGPT, testing a model where ads appear as organic recommendations within conversations rather than traditional display banners.

The Strategy:

- The Format: The company is testing “intent-based” ads that are woven naturally into the dialogue, triggering only when relevant to the user’s specific question.

- The Objective: The primary goal is to monetize the massive free user base to help offset the soaring infrastructure costs of model training and inference, adding a revenue stream alongside subscriptions.

- The Risk: Trust is the central variable; the company risks alienating users if the AI’s advice feels biased, commercially motivated, or indistinguishable from paid promotion.

The Details:

Instead of cluttering the interface with banners, OpenAI is considering formats including generative ads, affiliate-style revenue, and sponsored GPTs. This approach builds on the recently rolled-out “shopping research” feature. Internally, the concept focuses on spotting user intent, such as a request for product recommendations, and surfacing specific goods or services in the response. While leadership has discussed delaying ad work during recent internal “code red” periods to focus on product performance, reports indicate that testing of shopping-related ad formats is underway.

Why It Matters:

This marks a major evolution in the digital ad economy, shifting power from search keywords to conversational intent. An AI model that can identify what you want, write a persuasive pitch, and facilitate the purchase creates a high-conversion funnel that poses a direct threat to the traditional search advertising model (like Google’s). For users, this introduces a new layer of complexity and makes rigorous labeling and transparency essential. Without clear distinctions, users may struggle to tell whether they are receiving unbiased assistance or a paid placement.

Salesforce Scales Back Generative AI Ambitions Amid Reliability Concerns

Salesforce is pivoting its artificial intelligence strategy, admitting that large language models (LLMs) have proven too unpredictable for critical business functions, and is shifting its focus back toward structured automation.

The Pivot:

- The Shift: The company is moving its “Agentforce” platform away from open-ended generative AI toward “deterministic triggers” and rule-based automation to ensure consistency.

- The Cost: This strategic adjustment follows significant workforce reductions, with approximately 4,000 support roles cut as the company bet heavily on AI agents.

- The Issue: Internal confidence in LLMs has dropped due to “task drift,” the tendency of AI models to ignore instructions or fail when prompts become too complex.

The Details:

After an aggressive push into generative AI, Salesforce executives are acknowledging the limitations of current models in real-world enterprise environments. The primary complaints revolve around reliability; models often miss specific instructions or veer off-script during complex interactions. Consequently, the company is prioritizing strong data foundations and controlled, predictable workflows over the initial “AI-first” hype. While Agentforce remains a key revenue bet, the marketing and functionality are pivoting toward safety and predictability.

Why It Matters:

This is a significant “reality check” for the enterprise AI sector. Salesforce is effectively saying the quiet part out loud: LLMs are great at talking but shaky at doing. The move suggests that the immediate future of business tech isn’t unfettered AI improvisation but “guided determinism.” Enterprises are signaling that they value auditable, consistent outcomes over creative flair, pushing the market toward a hybrid model where AI handles the interface and rigid code handles the actual work.

Disclaimer: All images and videos used are for educational purposes only. We do not claim ownership of this content; all rights and credits belong to their respective owners.