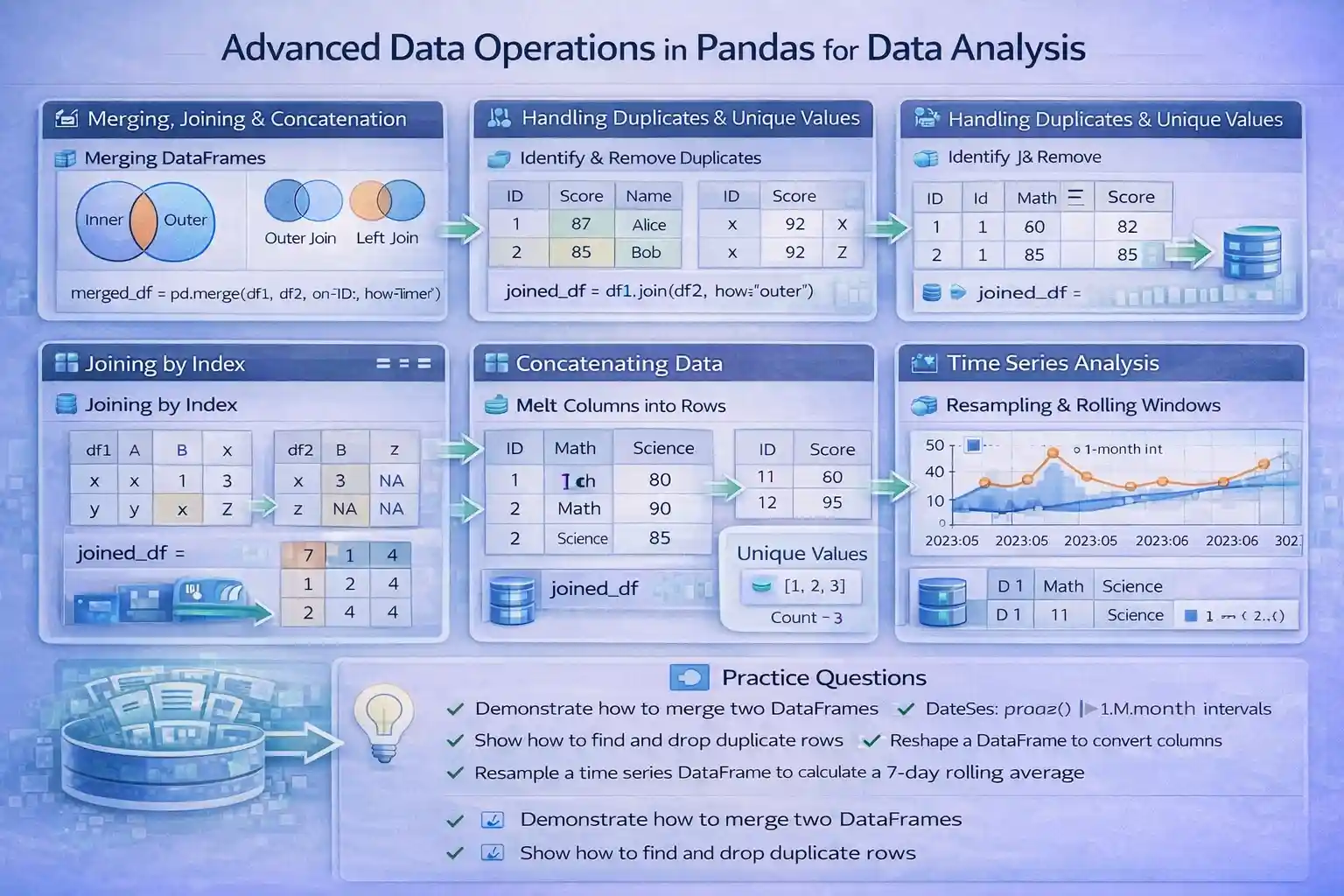

This study material provides a comprehensive overview of advanced data operations in pandas, focusing on techniques such as DataFrame merging, joining, concatenating, handling duplicates and unique values, reshaping data, and time series analysis. These core concepts are essential for efficient data manipulation, cleaning, and preparation in data analysis workflows, especially when managing large or complex datasets.

1. Merging, Joining, and Concatenating DataFrames

DataFrame Merging Techniques

Merging DataFrames in pandas allows combining datasets based on common keys or columns, emulating SQL-style join operations. The merge() function is highly versatile, supporting different types of joins:

- Inner Join: Returns rows with matching keys in both DataFrames.

- Outer Join: Returns all rows from both DataFrames, filling missing matches with NaN.

- Left Join: Returns all rows from the left DataFrame and matching rows from the right.

- Right Join: Returns all rows from the right DataFrame with matching entries from the left.

Example:

import pandas as pd

df1 = pd.DataFrame({'ID': [1, 2, 3], 'Score': [87, 92, 85]})

df2 = pd.DataFrame({'ID': [2, 3, 4], 'Name': ['Alice', 'Bob', 'Charlie']})

merged_inner = pd.merge(df1, df2, on='ID', how='inner')

# Result: Rows with ID 2 and 3 only

This method is particularly useful when integrating datasets from different sources like sales and customer databases, enabling comprehensive analysis.
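The other join types can be sketched with the same two DataFrames. This is a minimal illustration, not a prescribed workflow: `indicator=True` is an optional `merge()` argument that adds a `_merge` column, and the `'Unknown'` placeholder is an arbitrary choice made for this example.

```python
import pandas as pd

df1 = pd.DataFrame({'ID': [1, 2, 3], 'Score': [87, 92, 85]})
df2 = pd.DataFrame({'ID': [2, 3, 4], 'Name': ['Alice', 'Bob', 'Charlie']})

# Left join: keep every row of df1; Name is NaN where df2 has no match (ID 1)
merged_left = pd.merge(df1, df2, on='ID', how='left')

# One way to handle the unmatched rows: fill the gap with a placeholder
# ('Unknown' is an arbitrary value chosen for this sketch)
merged_left_filled = merged_left.fillna({'Name': 'Unknown'})

# Outer join: keep IDs 1 to 4; the '_merge' column records whether each row
# came from 'left_only', 'right_only', or 'both'
merged_outer = pd.merge(df1, df2, on='ID', how='outer', indicator=True)

print(merged_outer)
```

Inspecting `_merge` before filling or dropping anything is a quick way to see how many keys failed to match on each side.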

Efficient DataFrame Joining

The join() method simplifies merging DataFrames based on indices or keys. It is especially useful when DataFrames have a hierarchical index or a common index.

Example:

df1 = pd.DataFrame({'A': [1, 2]}, index=['x', 'y'])

df2 = pd.DataFrame({'B': [3, 4]}, index=['x', 'z'])

joined_df = df1.join(df2, how='outer')

# Combines DataFrames based on index with outer join

Because join() aligns rows on the index by default, it is a concise way to attach columns from another DataFrame whose index already holds the shared key.
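For comparison with the outer join above, the following sketch reuses the same two DataFrames with the default left join and with an inner join. The variable names `joined_left` and `joined_inner` are illustrative only.

```python
import pandas as pd

df1 = pd.DataFrame({'A': [1, 2]}, index=['x', 'y'])
df2 = pd.DataFrame({'B': [3, 4]}, index=['x', 'z'])

# Default how='left': keep df1's labels ('x', 'y'); 'y' has no match, so B is NaN
joined_left = df1.join(df2)

# how='inner': keep only labels present in both indexes ('x')
joined_inner = df1.join(df2, how='inner')

print(joined_left)
print(joined_inner)
```

If the two DataFrames share a column name, join() raises an error unless `lsuffix` or `rsuffix` is supplied to disambiguate the columns.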

Concatenating DataFrames for Big Data

concat() allows stacking DataFrames vertically (rows) or horizontally (columns), facilitating scalable data operations.

Example:

df1 = pd.DataFrame({'A': [1, 2]})

df2 = pd.DataFrame({'A': [3, 4]})

concatenated = pd.concat([df1, df2], ignore_index=True)

# Results in a single DataFrame with 4 rows

This function is vital for assembling large datasets, such as concatenating logs, transactions, or sensor readings.
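The example above stacks rows. A short sketch of two other common uses follows: labelling each source with `keys`, and placing DataFrames side by side with `axis=1`. The third DataFrame `df3` and the key labels are made up for this illustration.

```python
import pandas as pd

df1 = pd.DataFrame({'A': [1, 2]})
df2 = pd.DataFrame({'A': [3, 4]})
df3 = pd.DataFrame({'B': [5, 6]})  # hypothetical extra column for the example

# Vertical stacking with keys: the outer index level records each row's source
labeled = pd.concat([df1, df2], keys=['first', 'second'])

# Horizontal concatenation: rows are aligned on the index, columns sit side by side
side_by_side = pd.concat([df1, df3], axis=1)

print(labeled)
print(side_by_side)
```

Passing the whole list of DataFrames to a single concat() call is generally preferable to concatenating inside a loop, since each call copies the data.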

2. Handling Duplicates and Unique Values in Datasets

Identifying and Removing Duplicate Rows

Duplicate rows can distort analysis results, for example by inflating counts and sums. Pandas provides duplicated() to flag duplicates and drop_duplicates() to remove them.

Example:

data = pd.DataFrame({'ID': [1, 2, 2, 3], 'Value': [10, 20, 20, 30]})

duplicates = data.duplicated()

clean_data = data.drop_duplicates()

Removing duplicates ensures data integrity, particularly in large datasets collected from multiple sources.
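To make the behavior concrete, here is a small sketch on the same data showing what duplicated() flags under its default `keep='first'` and under `keep=False`. The `reset_index` step is optional and included only to show how to renumber rows afterward.

```python
import pandas as pd

data = pd.DataFrame({'ID': [1, 2, 2, 3], 'Value': [10, 20, 20, 30]})

# Default keep='first': only the repeated occurrence is flagged
flags = data.duplicated()
print(flags.tolist())  # [False, False, True, False]

# keep=False flags every member of a duplicate group
all_flags = data.duplicated(keep=False)
print(all_flags.tolist())  # [False, True, True, False]

# drop_duplicates keeps the original index labels; reset_index renumbers them
clean_data = data.drop_duplicates().reset_index(drop=True)
```

Checking `flags.sum()` before dropping gives the number of rows that would be removed.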

Extracting Unique Values for Categorical Features

Unique values help identify distinct categories or classes within a feature, aiding in feature engineering and exploratory data analysis.

Methods:

- unique() returns an array of unique values in a column.

- nunique() returns the count of distinct values.

Example:

categories = data['ID'].unique()

count_categories = data['ID'].nunique()

This simplifies understanding of categorical feature distributions, essential for models that require categorical encoding.
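A slightly fuller sketch on a categorical column is shown below. The `products` DataFrame and its `Type` column are hypothetical, and `value_counts()` is added here as a complementary method for seeing how rows are distributed across categories.

```python
import pandas as pd

# Hypothetical product data for illustration
products = pd.DataFrame({'Type': ['book', 'toy', 'book', 'game', 'toy', 'book']})

categories = products['Type'].unique()     # distinct values, in order of first appearance
n_categories = products['Type'].nunique()  # number of distinct values
counts = products['Type'].value_counts()   # rows per category, most frequent first

print(categories)
print(n_categories)
print(counts)
```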

Optimizing Data Cleaning with Conditional Duplicate Handling

Selective duplicate dropping based on subset columns allows data to be curated precisely:

clean_subset = data.drop_duplicates(subset=['ID'])

This facilitates accurate data cleaning tailored to analytical requirements.
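A common variant is keeping only the most recent record per ID. The sketch below assumes a timestamp column, here called `Updated`, which does not exist in the example data above and is introduced purely for illustration.

```python
import pandas as pd

# Hypothetical records; 'Updated' is an assumed timestamp column
records = pd.DataFrame({
    'ID': [1, 2, 2, 3],
    'Value': [10, 20, 25, 30],
    'Updated': pd.to_datetime(['2024-01-01', '2024-01-02', '2024-01-05', '2024-01-03']),
})

latest = (
    records.sort_values('Updated')                        # oldest first
           .drop_duplicates(subset=['ID'], keep='last')   # keep the newest row per ID
           .sort_values('ID')
           .reset_index(drop=True)
)

print(latest)
```

Sorting first matters: `keep='last'` refers to row position, so the rows must already be in chronological order for "last" to mean "latest".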

3. Reshaping Data with melt() and stack()/unstack() Methods

DataFrame Reshaping Using melt()

melt() transforms wide-format data into long-format, which simplifies aggregation and visualization.

Example:

df = pd.DataFrame({'ID': [1, 2], 'Math': [80, 90], 'Science': [85, 95]})

melted = pd.melt(df, id_vars=['ID'], value_vars=['Math', 'Science'], var_name='Subject', value_name='Score')

This transformation is crucial for preparing datasets for visualization tools and grouped analyses.
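As a rough illustration of why long format helps, the sketch below melts the same data, computes a grouped mean, and then uses pivot() to return to wide format. The names `mean_by_subject` and `wide` are illustrative.

```python
import pandas as pd

df = pd.DataFrame({'ID': [1, 2], 'Math': [80, 90], 'Science': [85, 95]})
melted = pd.melt(df, id_vars=['ID'], value_vars=['Math', 'Science'],
                 var_name='Subject', value_name='Score')

# Long format: one row per (ID, Subject) pair, so 4 rows and 3 columns
print(melted)

# Grouped analysis is a single groupby in long format
mean_by_subject = melted.groupby('Subject')['Score'].mean()

# pivot() reverses the melt, giving one column per subject again
wide = melted.pivot(index='ID', columns='Subject', values='Score')
```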

Stacking and Unstacking MultiIndex DataFrames

stack() pivots columns into rows, while unstack() reverses this process, enabling multi-dimensional data analysis.

Example:

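indexed_df = df.set_index('ID')  # single-level index on ID

stacked = indexed_df.stack()  # Series with a two-level (ID, column name) MultiIndex

unstacked = stacked.unstack()  # back to one column per subject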

These operations allow analysts to manage hierarchical data efficiently, supporting complex pivot tables and reports.
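The following sketch shows what the stacked result looks like and how the `level` argument of unstack() chooses which index level becomes the columns. It reuses the scores data from the melt() example; the variable names are illustrative.

```python
import pandas as pd

df = pd.DataFrame({'ID': [1, 2], 'Math': [80, 90], 'Science': [85, 95]})
wide = df.set_index('ID')

# stack(): columns move into an inner index level, giving a Series
# indexed by (ID, column name)
stacked = wide.stack()
print(stacked.loc[(1, 'Science')])  # 85

# unstack() with no argument moves the innermost level back to columns
restored = stacked.unstack()

# unstack(level=0) moves the ID level to the columns instead
by_id = stacked.unstack(level=0)
print(by_id)
```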

Practical Applications

Reshaping techniques underpin advanced analytics scenarios like pivot operations, normalization, and feature engineering for machine learning models.

4. Time Series Data Analysis and DateTime Indexing in Pandas

DateTime Data Parsing and Indexing

Converting date features into datetime objects enables pandas to perform time-aware operations such as slicing and resampling.

Example:

# Assumes df has a 'Date' column holding date strings or timestamps

df['Date'] = pd.to_datetime(df['Date'])

df.set_index('Date', inplace=True)

This provides the foundation for time series analysis, such as trend detection and seasonality studies.
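A self-contained sketch of parsing, indexing, and then slicing by date is given below. The sample dates and the `Value` column are invented for the example.

```python
import pandas as pd

# Hypothetical daily readings; dates start as plain strings
df = pd.DataFrame({
    'Date': ['2024-01-30', '2024-01-31', '2024-02-01', '2024-02-02'],
    'Value': [10, 12, 11, 15],
})

df['Date'] = pd.to_datetime(df['Date'])
df = df.set_index('Date').sort_index()

# Partial-string indexing: all rows in February 2024
february = df.loc['2024-02']

# Date-range slicing on a DatetimeIndex includes both endpoints
window = df.loc['2024-01-31':'2024-02-01']

print(february)
print(window)
```

Sorting the index after setting it is a cheap safeguard, since slicing by date range is only reliable on a sorted DatetimeIndex.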

Resampling and Frequency Conversion

resample() aggregates data over specified time intervals, key to trend and seasonal component analysis.

Example:

# 'M' means month end; pandas 2.2+ deprecates this alias in favor of 'ME'

monthly_data = df.resample('M').mean()

Analyzing data at different temporal granularities helps identify long-term patterns and anomalies.
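A minimal runnable sketch of monthly resampling follows. The daily series is synthetic, and the `'MS'` (month start) alias is used here instead of a month-end alias only because it is accepted across pandas versions; it labels each bin with the first day of the month.

```python
import pandas as pd

# Synthetic daily series: January 2024 (31 days at 1.0), February 2024 (29 days at 3.0)
idx = pd.date_range('2024-01-01', '2024-02-29', freq='D')
daily = pd.DataFrame({'Value': [1.0] * 31 + [3.0] * 29}, index=idx)

# Monthly mean, with each bin labelled by the month's first day
monthly_mean = daily.resample('MS').mean()

# Any aggregation can follow resample(); here, the monthly total
monthly_total = daily.resample('MS').sum()

print(monthly_mean)
print(monthly_total)
```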

Handling Missing Dates and Time Series Alignment

Addressing irregularities involves filling missing values using forward/backward fill techniques:

df = df.asfreq('D').ffill()  # fillna(method='ffill') is deprecated in recent pandas versions

Proper alignment ensures accurate temporal comparisons across multiple datasets, vital in forecasting.
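The two steps can be seen separately in the sketch below: asfreq() inserts the missing days as NaN rows, and ffill() then carries the last known value forward. The `sparse` DataFrame is invented, and `limit=1` is shown as one optional way to avoid filling long gaps.

```python
import pandas as pd

# Hypothetical series with a gap: 3 and 4 January are missing
sparse = pd.DataFrame(
    {'Value': [10.0, 20.0, 50.0]},
    index=pd.to_datetime(['2024-01-01', '2024-01-02', '2024-01-05']),
)

with_gaps = sparse.asfreq('D')      # 5 daily rows, 2 of them NaN
filled = with_gaps.ffill()          # carry the last observation forward
limited = with_gaps.ffill(limit=1)  # fill at most one consecutive missing day

print(filled)
print(limited)
```

Backward fill works the same way with bfill(), taking the next available value instead of the previous one.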

Rolling Window Calculations for Time Series Smoothing

Rolling statistics such as rolling().mean() smooth out short-term fluctuations, enhancing trend visibility.

Example:

df['RollingAvg'] = df['Value'].rolling(window=7).mean()

This is critical in applications like financial data analysis, sensor data smoothing, and predictive modeling.
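One detail worth seeing in practice is that a 7-row window produces NaN for the first six rows. The sketch below uses synthetic data and shows the optional `min_periods` argument as one way to get partial-window averages at the start of the series.

```python
import pandas as pd

# Synthetic daily values 1..10
idx = pd.date_range('2024-01-01', periods=10, freq='D')
df = pd.DataFrame({'Value': range(1, 11)}, index=idx)

# Full 7-day window: the first 6 rows are NaN because the window is incomplete
df['RollingAvg'] = df['Value'].rolling(window=7).mean()

# min_periods=1: average over however many rows are available so far
df['RollingAvgPartial'] = df['Value'].rolling(window=7, min_periods=1).mean()

print(df)
```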

Practice Questions

- Given two DataFrames with customer IDs and transaction amounts, demonstrate how to perform an inner merge based on customer ID.

- Write code to remove duplicate rows from a dataset but retain only the latest entry for each ID.

- Transform a wide dataset with yearly sales figures into a long format suitable for trend analysis using `melt()`.

- Using a time series DataFrame with daily data, resample it to obtain monthly averages.

- Create a DataFrame with missing dates and fill these gaps with forward fill method.

- Explain the difference between `stack()` and `unstack()` with a code example.

- How would you identify the number of unique categories in a product type column?

- Demonstrate concatenating three DataFrames vertically into one.

- After merging datasets, how can you handle missing values that result from non-matching keys?

- Discuss the importance of using `pd.to_datetime()` in time series data analysis.

Sample Outputs:

1. Merged dataset with customer IDs and transaction values.

2. DataFrame with duplicate rows dropped based on 'ID', keeping only latest entries.

3. Long-format DataFrame showing each year's sales per product.

4. Monthly average sales calculated from daily data.

5. DataFrame with missing dates filled with previous available values.

6. `stack()` pivots columns into rows; `unstack()` pivots rows into columns.

7. The `nunique()` method provides the count of distinct categories.

8. Concatenated DataFrame with combined rows from three datasets.

9. Fill missing values with `fillna()` method to maintain data completeness.

10. `pd.to_datetime()` ensures proper date parsing and compatibility with time-based operations.

This educational guide offers a foundational understanding paired with practical insights into advanced data operations in pandas, emphasizing the importance of data merging, deduplication, reshaping, and time series analysis for robust data analysis workflows.
