1. Introduction to Conversational AI

Definition and Significance

Conversational Artificial Intelligence (AI) refers to systems designed to interact with humans in natural language, through text or speech. The goal is to let machines understand, process, and generate human language, which changes how people communicate with computers. Such systems improve user engagement, automate support, and provide more natural, human-like interactions.

Key Components of Conversational AI

- Natural Language Processing (NLP): The core technology that interprets human language by analyzing syntax and semantics. For example, parsing the query “What’s the weather today?” identifies a weather request and the time frame “today.”

- Machine Learning (ML): Algorithms that improve language understanding and response quality through exposure to data. For instance, a chatbot learns to recognize new entities over time.

- Speech Recognition: Converts spoken words into digital text, enabling voice-based commands. For example, Alexa hearing “Play jazz music.”

- Text-to-Speech (TTS): Transforms generated text responses into human-like speech, such as Siri vocalizing a weather forecast.

Real-World Applications

Popular virtual assistants like Siri, Alexa, and Google Assistant demonstrate conversational AI in action, performing tasks from setting alarms to controlling smart homes. Customer support chatbots automate FAQs, reducing workload on human agents and providing 24/7 assistance.

Dialog Management & Context Tracking

Effective conversational systems track contextual information to keep multi-turn conversations coherent. For example, after the user asks “What’s my schedule today?”, the system keeps the intent (a schedule lookup) in memory, so a follow-up such as “And tomorrow?” is understood as a schedule request for the next day.
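One way to picture context tracking is a small state object that survives between turns. The sketch below is a toy illustration, not a production dialog manager: the names `DialogContext`, `interpret`, and `get_schedule` are hypothetical, and keyword matching stands in for a trained NLU model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DialogContext:
    """Holds the most recent intent and slot values across turns."""
    last_intent: Optional[str] = None
    slots: dict = field(default_factory=dict)


def interpret(utterance: str, ctx: DialogContext):
    """Toy interpreter: a follow-up without an intent reuses the stored one."""
    text = utterance.lower()
    if "schedule" in text:
        ctx.last_intent = "get_schedule"
    # Update the day slot whenever the user mentions one.
    for day in ("today", "tomorrow"):
        if day in text:
            ctx.slots["day"] = day
    if ctx.last_intent is None:
        return ("unknown", {})
    return (ctx.last_intent, dict(ctx.slots))


ctx = DialogContext()
first = interpret("What's my schedule today?", ctx)
follow_up = interpret("And what about tomorrow?", ctx)
```

The second call never mentions a schedule, yet it resolves to the same intent because the context object carried it forward; only the `day` slot changes.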

Resources for Beginners

- Coursera: “Introduction to NLP,” “AI for Everyone”

- Udemy: Beginner tutorials on chatbot development

- freeCodeCamp: Foundational tutorials on conversational AI and Python implementations

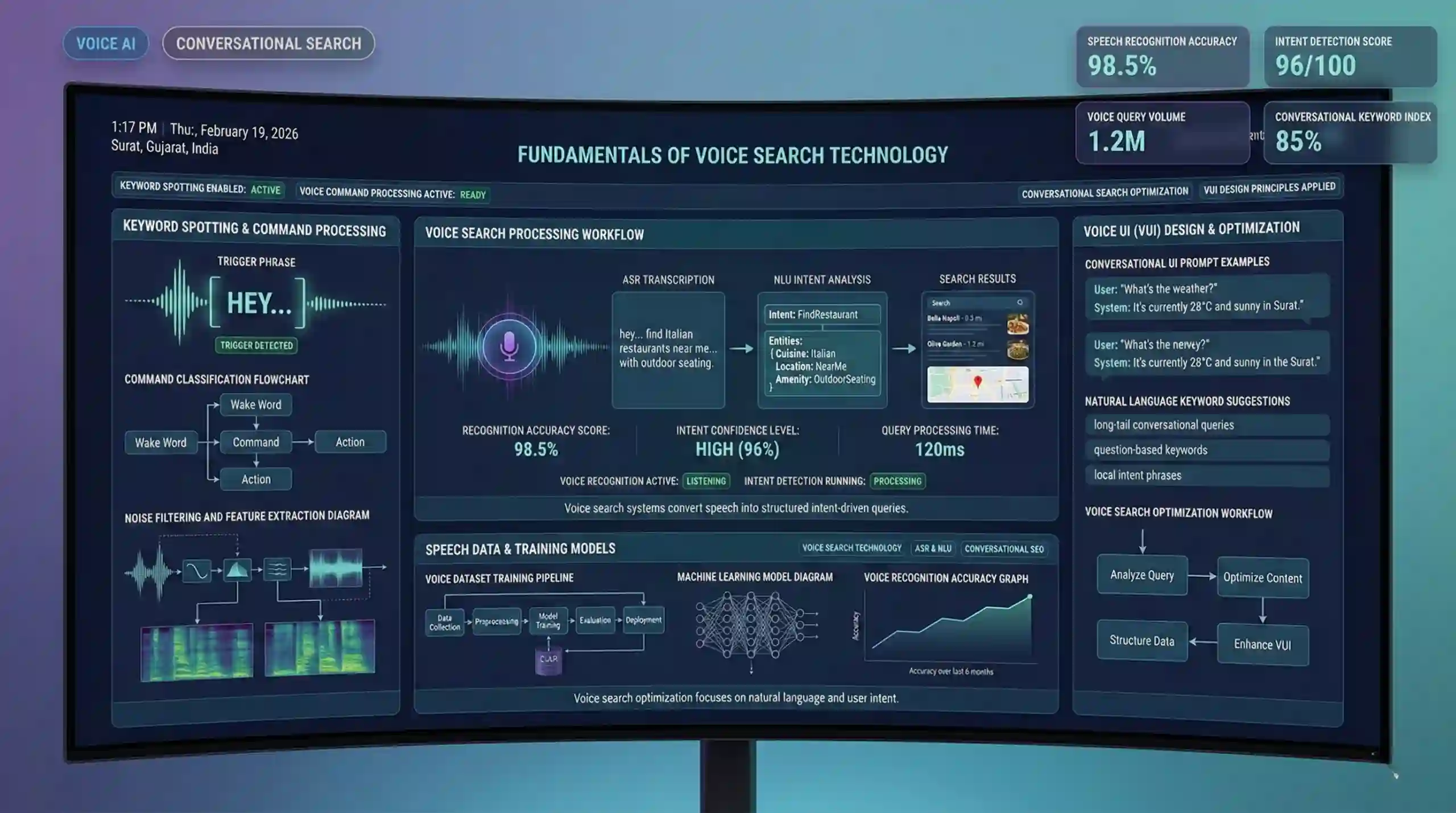

2. Fundamentals of Voice Search Technology

Voice Search System Architecture

Voice search systems comprise multiple layers, chiefly Automatic Speech Recognition (ASR) and Natural Language Understanding (NLU). ASR transcribes speech into text, which NLU then interprets to determine user intent. For example, saying “Find nearby coffee shops” produces a transcript that the NLU layer maps to a local-search intent, which the system uses to return relevant results.

Keyword Spotting & Intent Detection

Keyword spotting detects trigger phrases such as “Hey Google” that wake the device and start a voice command. Intent detection then interprets the user’s purpose, for example recognizing “Set an alarm for 7 AM” as an alarm-setting intent.
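The two steps can be sketched on text transcripts. This is a simplification under stated assumptions: real keyword spotting runs on audio with a small always-on model, whereas here the wake phrase is checked on an already transcribed string. The intent names, regular expressions, and the function `handle_transcript` are hypothetical.

```python
import re
from typing import Optional

WAKE_PHRASE = "hey google"  # trigger phrase from the example in the text

# Hypothetical patterns; a real NLU layer would use a trained classifier.
INTENT_PATTERNS = {
    "set_alarm": re.compile(
        r"\bset an alarm for (?P<time>\d{1,2}(?::\d{2})?\s*(?:am|pm))", re.I
    ),
    "find_places": re.compile(r"\bfind nearby (?P<place>[a-z ]+)", re.I),
}


def handle_transcript(transcript: str) -> Optional[dict]:
    """Return an intent dict, or None when the wake phrase is missing."""
    text = transcript.strip()
    if not text.lower().startswith(WAKE_PHRASE):
        return None  # no trigger phrase, so the command is ignored
    command = text[len(WAKE_PHRASE):].lstrip(" ,")
    for intent, pattern in INTENT_PATTERNS.items():
        match = pattern.search(command)
        if match:
            return {"intent": intent, "slots": match.groupdict()}
    return {"intent": "unknown", "slots": {}}


result = handle_transcript("Hey Google, set an alarm for 7 AM")
```

Separating the wake-phrase check from intent matching mirrors the architecture described above: the first stage decides whether to listen at all, the second decides what was asked.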

Design Principles for Voice User Interface (VUI)

Designing effective voice interfaces hinges on clarity and simplicity. For example, prompts like “Would you like to set an alarm for tomorrow?” guide users effectively. Good VUI design minimizes confusion and supports natural conversational flow.

Speech Datasets & Voice Command Processing

Datasets like Google Speech Commands help train models to recognize predefined voice cues. Processing voice commands often involves noise filtering, feature extraction, and classification algorithms to ensure accurate recognition.
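The filter, extract, classify sequence can be illustrated with a deliberately tiny example. Everything here is an assumption made for illustration: synthetic sine tones stand in for recorded clips, a moving average stands in for noise filtering, energy and zero-crossing rate stand in for richer features such as MFCCs, and a nearest-centroid rule stands in for a trained classifier.

```python
import math


def moving_average(signal, window=5):
    """Crude noise filter: each sample becomes the mean of a trailing window."""
    smoothed = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - window + 1):i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def extract_features(signal):
    """Return (mean energy, zero-crossing rate) for one clip."""
    energy = sum(s * s for s in signal) / len(signal)
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if (a >= 0) != (b >= 0))
    return (energy, crossings / (len(signal) - 1))


def classify(features, centroids):
    """Nearest-centroid rule: pick the label whose centroid is closest."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


def tone(freq_hz, n_samples=1600, sample_rate=16000):
    """Synthetic stand-in for a recorded clip: a pure sine tone."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n_samples)]


# "Training": one reference clip per class gives one centroid per class.
centroids = {
    "low": extract_features(moving_average(tone(200))),
    "high": extract_features(moving_average(tone(2000))),
}
label = classify(extract_features(moving_average(tone(300))), centroids)
```

A 300 Hz tone lands closer to the 200 Hz reference than to the 2000 Hz one, so it is labeled `low`. Real command recognizers follow the same three stages with far more expressive features and models.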

Voice Search Optimization

Companies optimize voice search for mobile devices and smart speakers by focusing on conversational queries. SEO strategies now include natural-language phrases (“What’s the weather like today?”) that mirror how people speak, which can affect how content ranks for voice queries.

Resources for Beginners

- Google developer documentation on Voice Search APIs

- Udemy tutorials on building voice-activated apps

- Industry case studies from Amazon Alexa and Google Assistant

3. Development of AI Chatbots

Chatbot Architecture: Rule-Based vs. AI-Powered

Rule-based chatbots rely on predefined scripts and fixed responses, suitable for straightforward tasks. AI-powered chatbots utilize machine learning, enabling understanding of varied queries and dynamic response generation. For example, rule-based bots can handle FAQs like “What are your hours?” while AI chatbots can process complex, unpredictable inputs.
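A rule-based bot can be as small as a keyword table with a fallback. The sketch below is illustrative only: the keywords and the reply strings are placeholders, not real business data, and `rule_based_reply` is a hypothetical name.

```python
# Placeholder rules: keyword -> canned reply.
RULES = {
    "hours": "Our opening hours are listed on the contact page.",
    "refund": "Refund requests can be submitted through your order history.",
}
FALLBACK = "Sorry, I did not understand that. Could you rephrase?"


def rule_based_reply(message: str) -> str:
    """Return the first canned reply whose keyword appears in the message."""
    text = message.lower()
    for keyword, reply in RULES.items():
        if keyword in text:
            return reply
    return FALLBACK


answer = rule_based_reply("What are your hours?")
```

The limitation is visible immediately: “When do you open?” asks the same question but contains no listed keyword, so it falls through to the fallback. Handling that kind of variation is where learned intent models come in.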

Core Functions of Chatbots

- Intent Recognition: Determines what the user wants, such as “Book a flight” or “Check my account.”

- Entity Extraction: Identifies relevant details within the query, like dates or locations, e.g., extracting “New York” from “Find flights to New York next week.”

- Response Generation: Creates appropriate replies based on intent and context, adapting dynamically.
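The three functions above can be chained into a minimal pipeline. This is a sketch under simplifying assumptions: keyword lookup replaces a trained intent classifier, regular expressions replace a trained entity recognizer, and templates replace a generative model. All intent names, keywords, and function names are hypothetical.

```python
import re

INTENT_KEYWORDS = {
    "book_flight": ("flight", "fly"),
    "check_account": ("account",),
}


def recognize_intent(text: str) -> str:
    """Pick the first intent whose keyword occurs in the text."""
    lowered = text.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return intent
    return "unknown"


def extract_entities(text: str) -> dict:
    """Pull a destination and a coarse date expression out of the text."""
    entities = {}
    # Destination: capitalized words after "to", e.g. "to New York".
    destination = re.search(r"\bto ((?:[A-Z][a-z]+ ?)+)", text)
    if destination:
        entities["destination"] = destination.group(1).strip()
    when = re.search(r"\b(today|tomorrow|next week)\b", text, re.I)
    if when:
        entities["date"] = when.group(1).lower()
    return entities


def generate_response(intent: str, entities: dict) -> str:
    """Fill a reply template from the intent and extracted entities."""
    if intent == "book_flight":
        destination = entities.get("destination", "your destination")
        date = entities.get("date", "your travel date")
        return f"Searching flights to {destination} for {date}."
    if intent == "check_account":
        return "Here is your account summary."
    return "Could you rephrase that?"


query = "Find flights to New York next week"
reply = generate_response(recognize_intent(query), extract_entities(query))
```

Keeping the three stages as separate functions makes it possible to swap any one of them for a learned component without touching the others.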

Popular Chatbot Platforms and Tools

- Dialogflow: Google’s NLP platform for designing conversational agents

- Microsoft Bot Framework: Supports deploying across multiple channels

- Rasa: Open-source framework for highly customizable bots

- IBM Watson Assistant: Enterprise-grade chatbot solution

Handling Multi-turn & Personalized Conversations

Advanced chatbots track dialogue context to engage in multi-turn interactions, such as booking an appointment and recalling user preferences for future interactions.
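A common way to structure such a conversation is slot filling: the bot keeps asking until every required detail is known, and stored preferences pre-fill some slots. The class below is a hypothetical sketch; the slot names and prompt wording are assumptions chosen for the appointment example.

```python
REQUIRED_SLOTS = ("service", "date", "time")


class AppointmentDialog:
    """Collects required slots over several turns before confirming."""

    def __init__(self, preferences=None):
        # Remembered preferences (e.g. the usual service) pre-fill slots.
        self.slots = dict(preferences or {})

    def provide(self, slot, value):
        """Record a value the user supplied in the current turn."""
        self.slots[slot] = value

    def next_prompt(self):
        """Ask for the first missing slot, or confirm when all are filled."""
        for slot in REQUIRED_SLOTS:
            if slot not in self.slots:
                return f"What {slot} would you like?"
        return "Booking {service} on {date} at {time}.".format(**self.slots)


dialog = AppointmentDialog(preferences={"service": "haircut"})
first_prompt = dialog.next_prompt()
dialog.provide("date", "Friday")
dialog.provide("time", "10:00")
confirmation = dialog.next_prompt()
```

Because the service was remembered from an earlier session, the bot skips that question and starts by asking for the date.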

Practical Building & Deployment

Beginners can start by creating simple chatbots using platforms like Dialogflow, integrating responses with messaging apps such as Facebook Messenger or Slack.

Resources for Development

- Official platform guides (Google Dialogflow, Rasa)

- Educational channels on YouTube

- Developer forums like Stack Overflow and GitHub repositories

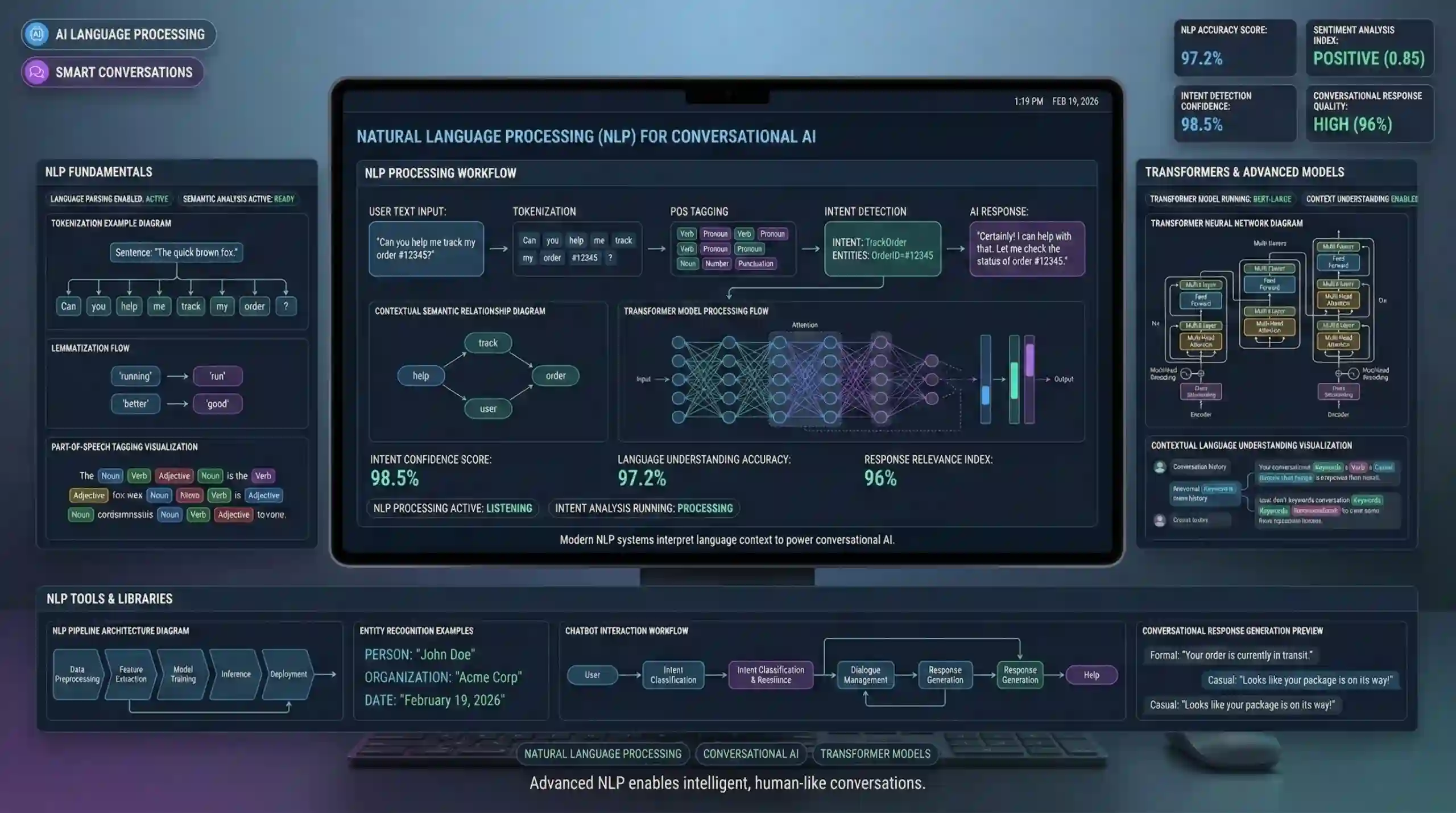

4. Natural Language Processing (NLP) for Conversational AI

NLP Fundamentals

- Tokenization: Dividing sentences into words or phrases, e.g., “Book a flight” into [“Book”, “a”, “flight”].

- Lemmatization: Reducing words to their dictionary form (lemma), e.g., “running” to “run,” so that different inflections are processed as the same word.

- Sentiment Analysis: Determining emotional tone—happy, angry, neutral—from user input.

- Part-of-Speech Tagging: Identifying grammatical roles—noun, verb, adjective—in sentences.
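The first three steps can be illustrated with the standard library alone. This is a toy sketch: the lemma table and sentiment word lists are tiny hand-written assumptions, whereas libraries such as NLTK and spaCy ship trained or curated resources for the same tasks. Part-of-speech tagging is left out because it needs a trained model or a large dictionary.

```python
import re

# Tiny hand-written resources, for illustration only.
LEMMAS = {"running": "run", "flights": "flight", "booked": "book"}
POSITIVE = {"great", "happy", "love"}
NEGATIVE = {"angry", "terrible", "hate"}


def tokenize(sentence: str) -> list:
    """Split a sentence into word and number tokens, dropping punctuation."""
    return re.findall(r"[A-Za-z']+|\d+", sentence)


def lemmatize(token: str) -> str:
    """Look the token up in the toy lemma table; default to lowercase."""
    return LEMMAS.get(token.lower(), token.lower())


def sentiment(tokens: list) -> str:
    """Count positive minus negative words and map the score to a label."""
    words = [token.lower() for token in tokens]
    score = sum((w in POSITIVE) - (w in NEGATIVE) for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


tokens = tokenize("Book a flight")
lemmas = [lemmatize(t) for t in tokenize("She booked two flights")]
tone_label = sentiment(tokenize("I hate this terrible delay"))
```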

Transformers & Modern Language Models

Transformer models such as BERT and GPT have reshaped NLP. Their self-attention mechanism lets each token draw on context from anywhere in a long sequence, which yields more human-like language understanding and generation.
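The mechanism that gives transformers access to long-range context is scaled dot-product attention, softmax(QKᵀ / √d_k)V. The sketch below implements that formula in plain Python for readability. It is a single attention head without learned projections, masking, or batching, so it shows the core computation rather than a full transformer layer.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Two identical keys receive equal weight, so the output is the mean value.
out = attention([[1.0, 0.0]], [[1.0, 0.0], [1.0, 0.0]], [[2.0], [4.0]])
```

Every query scores every key regardless of distance in the sequence, which is why distant tokens can influence each other directly.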

Enhancing Intent Understanding with NLP Algorithms

Using NLP techniques, systems can handle ambiguous queries and recognize nuanced user intents, improving chatbot responsiveness in complex scenarios, such as customer complaints or booking requests.

Key NLP Libraries & Tools

- NLTK: For foundational NLP tasks in Python

- spaCy: Fast, easy-to-use processing, including named entity recognition

- Hugging Face Transformers: Access to pretrained models such as GPT and BERT

Resources for Beginners

- Medium tutorials on NLP fundamentals

- Coursera’s “Natural Language Processing Specialization” by DeepLearning.AI

5. Machine Learning & Deep Learning in Conversational AI

Core Algorithms

Neural networks, support vector machines, and decision trees are common choices for intent classification, and neural networks dominate modern speech recognition. Recurrent and transformer-based networks are particularly effective for sequential data such as speech and text.

Supervised vs. Unsupervised Learning

Supervised learning uses labeled data to train models—for example, training on examples of user queries with annotated intents. Unsupervised learning finds patterns in unlabeled data, useful for clustering similar user inputs.
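As a concrete instance of supervised learning, the sketch below trains a small Naive Bayes intent classifier on labeled queries. Naive Bayes is chosen here only because it fits in a few lines; the training examples, intent labels, and function names are hypothetical. An unsupervised approach would receive the same queries without the labels and group them by similarity instead.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(examples):
    """Count words per intent from (text, intent) pairs."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    vocab = set()
    for text, label in examples:
        tokens = text.lower().split()
        word_counts[label].update(tokens)
        label_counts[label] += 1
        vocab.update(tokens)
    return word_counts, label_counts, vocab


def predict(model, text):
    """Return the intent with the highest log-probability for the text."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in label_counts.items():
        score = math.log(count / total)  # log prior
        denominator = sum(word_counts[label].values()) + len(vocab)
        for token in text.lower().split():
            if token not in vocab:
                continue  # ignore words never seen in training
            # Laplace (add-one) smoothing avoids log(0).
            score += math.log((word_counts[label][token] + 1) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


training_data = [
    ("set an alarm for seven", "set_alarm"),
    ("wake me up at six", "set_alarm"),
    ("play jazz music", "play_music"),
    ("play some rock songs", "play_music"),
]
model = train_naive_bayes(training_data)
guess = predict(model, "play music please")
```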

Deep Learning Techniques

- Neural Networks: The fundamental architecture for many AI models

- Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks: Process speech and language sequences step by step, carrying state forward from earlier inputs

- Transformers: Handle long-range dependencies in language understanding, leading to better conversational AI performance

Training, Validation, Deployment

A typical workflow involves collecting data, training models, evaluating their accuracy using validation datasets, and deploying for real-world use with scalable infrastructure.
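The evaluation step of this workflow can be sketched as a held-out split plus an accuracy measure. The helper names `split_dataset` and `accuracy` and the 20% validation fraction are assumptions for illustration; the predictor passed in can be any function that maps a query to a label.

```python
import random


def split_dataset(examples, validation_fraction=0.2, seed=0):
    """Shuffle deterministically, then hold out a fraction for validation."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_validation = max(1, round(len(shuffled) * validation_fraction))
    return shuffled[:-n_validation], shuffled[-n_validation:]


def accuracy(predict_fn, examples):
    """Fraction of (text, label) pairs the predictor labels correctly."""
    correct = sum(1 for text, label in examples if predict_fn(text) == label)
    return correct / len(examples)


# Hypothetical labeled data: ten queries with alternating labels.
examples = [(f"query {i}", "even" if i % 2 == 0 else "odd") for i in range(10)]
train_set, validation_set = split_dataset(examples)
```

Measuring accuracy only on `validation_set`, which the model never saw during training, gives a more honest estimate of real-world performance than scoring on the training data.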

Study Resources

- Andrew Ng’s “Machine Learning” course on Coursera

- “Deep Learning Specialization” from DeepLearning.AI

- Fast.ai tutorials

6. Dataset and Data Collection for Voice and Chat Applications

Public Datasets

- LibriSpeech: Large corpus for speech recognition

- Mozilla Common Voice: Large open-source voice dataset for multilingual training

- SNIPS Dataset: For intent classification tasks

Data Preprocessing & Augmentation

Normalization, noise addition, and data balancing improve model robustness. Annotating datasets accurately is critical for training effective NLP and speech models.
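Each of the three techniques can be sketched in a few lines. These are simplified stand-ins: text normalization here only lowercases and strips punctuation, noise addition uses Gaussian noise on a raw sample list, and balancing uses random oversampling of the smaller classes. The function names and default parameters are assumptions.

```python
import random
import re


def normalize_text(text: str) -> str:
    """Lowercase, drop punctuation (except apostrophes), collapse spaces."""
    text = text.lower().strip()
    text = re.sub(r"[^\w\s']", "", text)
    return re.sub(r"\s+", " ", text)


def add_noise(signal, noise_level=0.01, seed=0):
    """Augment an audio clip by adding small Gaussian noise to each sample."""
    rng = random.Random(seed)
    return [sample + rng.gauss(0.0, noise_level) for sample in signal]


def oversample(examples, seed=0):
    """Balance classes by repeating random examples from smaller classes."""
    rng = random.Random(seed)
    by_label = {}
    for text, label in examples:
        by_label.setdefault(label, []).append((text, label))
    target = max(len(items) for items in by_label.values())
    balanced = []
    for items in by_label.values():
        balanced.extend(items)
        balanced.extend(rng.choice(items) for _ in range(target - len(items)))
    return balanced


clean = normalize_text("  What's   the WEATHER today?! ")
```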

Ethical Data Collection & Privacy

Ensuring user consent, anonymization of data, and reducing bias are imperative to ethical AI development. Implementation of data security policies is essential.
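As a small illustration of anonymization, the sketch below masks email addresses and phone-like numbers in transcripts before they are stored. The regular expressions are rough assumptions that will miss many formats and other kinds of personal data, so this is a starting point rather than a complete privacy control.

```python
import re

# Rough patterns, for illustration; real pipelines need broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def anonymize(text: str) -> str:
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL.sub("<EMAIL>", text)
    return PHONE.sub("<PHONE>", text)


masked = anonymize("Mail me at jane.doe@example.com or call +1 555-123-4567.")
```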

7. Deployment & Optimization

Cloud Platforms

- Amazon Web Services (AWS) with Lex and SageMaker

- Google Cloud with Dialogflow and Vertex AI

- Microsoft Azure with Azure Bot Service

API & Dialogue Management

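Well-defined APIs connect the conversational front end to backend services, such as a booking or account system, and explicit dialogue-state management keeps multi-turn conversations consistent across requests. Together they improve the user experience.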

Analytics & Continuous Improvement

Monitoring interactions, analyzing user feedback, and updating models ensure the AI system improves over time, increasing accuracy and engagement.
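Monitoring can start with a few aggregate metrics over logged interactions. The sketch below assumes a hypothetical log format in which each record has an `intent` field (with `fallback` marking turns the bot could not handle) and an optional user `rating`; real platforms expose their own schemas.

```python
from collections import Counter


def summarize_interactions(logs):
    """Aggregate simple health metrics from a list of interaction records."""
    total = len(logs)
    intents = Counter(entry["intent"] for entry in logs)
    ratings = [entry["rating"] for entry in logs if entry.get("rating") is not None]
    return {
        "total": total,
        # Share of turns where the bot failed to match any intent.
        "fallback_rate": intents["fallback"] / total if total else 0.0,
        "top_intents": intents.most_common(3),
        "avg_rating": sum(ratings) / len(ratings) if ratings else None,
    }


logs = [
    {"intent": "set_alarm", "rating": 5},
    {"intent": "fallback", "rating": 1},
    {"intent": "set_alarm"},
    {"intent": "fallback", "rating": None},
]
report = summarize_interactions(logs)
```

A rising fallback rate is a useful signal for which user phrasings to add to the training data in the next model update.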

Techniques for Optimization

Personalization, adaptive responses, proactive suggestions, and robust error handling sustain high user satisfaction.

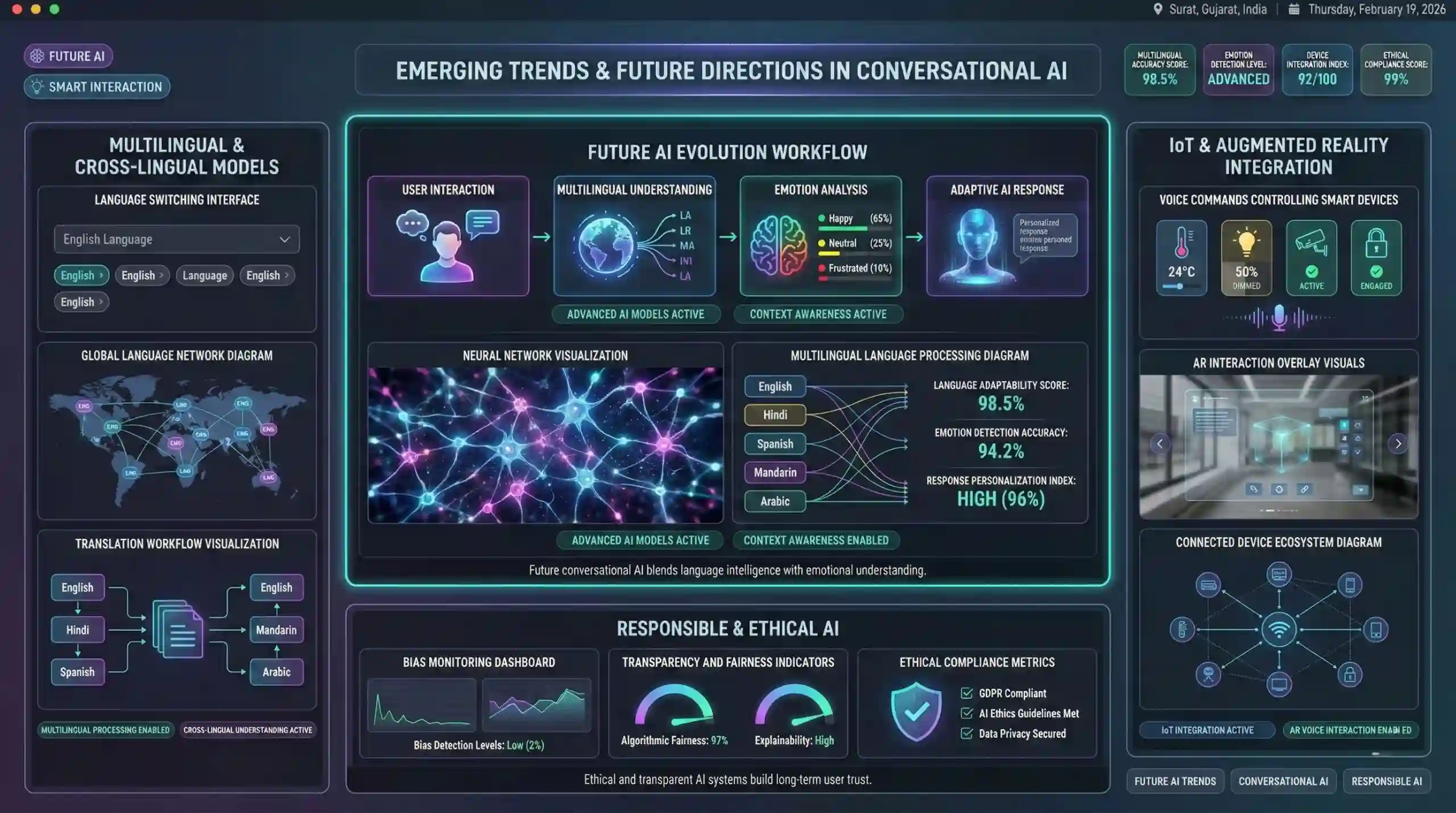

8. Emerging Trends & Future Directions

Multilingual & Cross-Lingual Models

Developing AI capable of understanding and responding across languages broadens accessibility and user base.

Emotion & Sentiment Recognition

Incorporating emotion detection enhances empathetic responses, fostering trust.

Integration with IoT & Augmented Reality

Voice commands within IoT devices and AR environments signal the expanding reach of conversational AI.

Responsible & Ethical AI

Transparency, fairness, and bias mitigation remain vital for future AI systems, ensuring societal trust.
