Vectorization for Efficient Numeric Computations in Data Science

Definition and Importance of Vectorization in Data Science

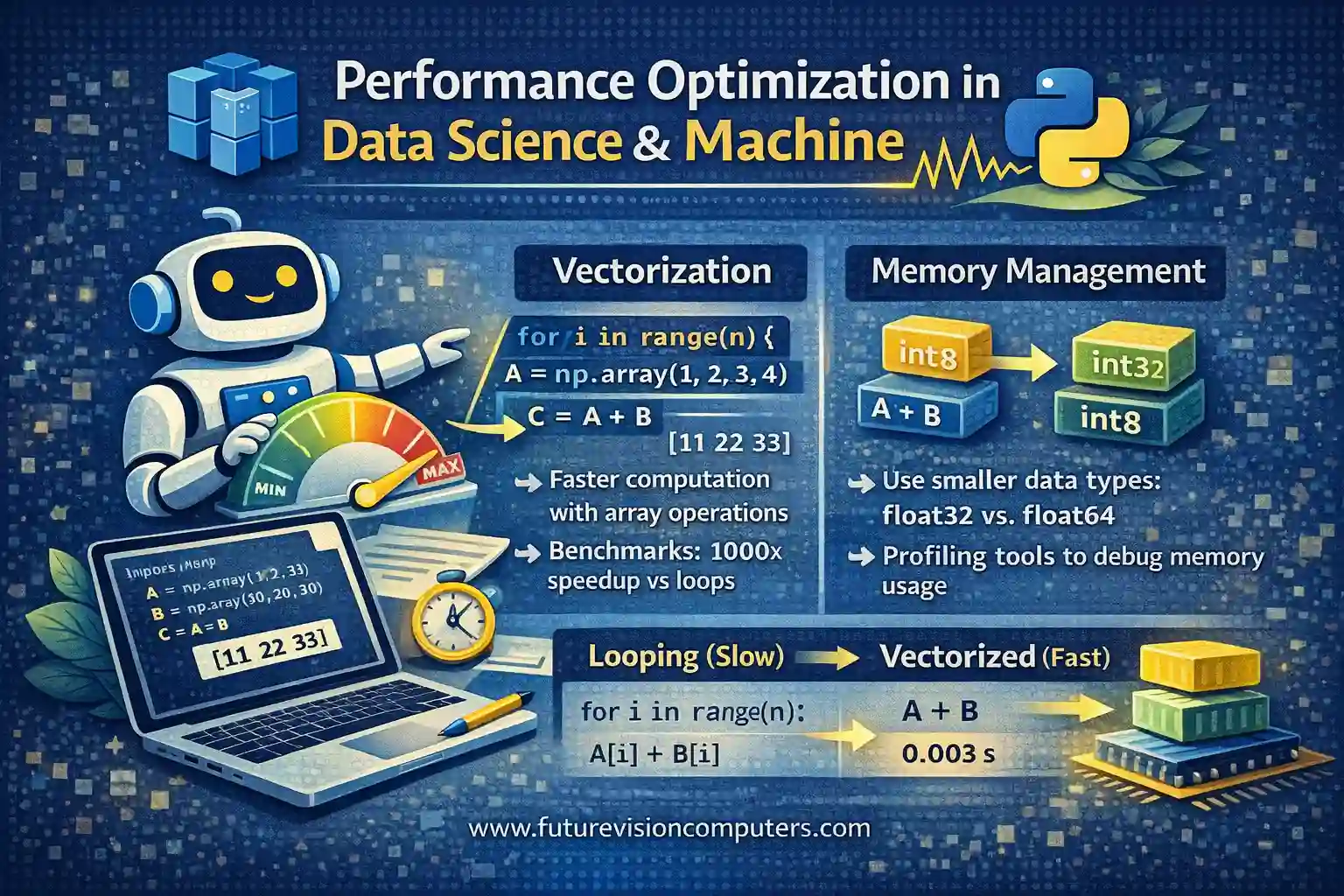

Vectorization refers to replacing explicit iterative loops with array or matrix operations that execute in optimized, compiled code: NumPy's own C routines for element-wise work, and linear algebra libraries such as BLAS or LAPACK for matrix products and decompositions. In data science, vectorization lets a single expression apply an element-wise or matrix operation to an entire dataset, which significantly reduces computation time. Instead of iterating through data points with Python for-loops, vectorized operations hand the whole array to these routines, making better use of hardware features such as CPU SIMD instructions and caches. GPU array libraries follow the same programming model.

Real-world example:

Suppose we have two large arrays, A and B, each containing millions of elements representing feature vectors. Calculating their element-wise sum using loops would be slow, whereas using vectorized addition (A + B) accomplishes the task in a fraction of the time, thanks to optimized libraries.

Benefits of Vectorization Over Traditional Looping in Machine Learning Algorithms

Vectorized operations provide several advantages over traditional loop-based computations. The primary benefit is speed: vectorized computations avoid per-element interpreter overhead and can use low-level hardware acceleration and parallelism, which is especially noticeable with large datasets during model training or inference. Vectorization also simplifies code, making it more concise and reducing the potential for bugs associated with manual loops, such as off-by-one index errors.

Practical implication:

Training a linear regression model on a dataset with one million samples is typically much faster with vectorized matrix operations than with nested Python loops. Depending on the number of features and the hardware, training time can drop from minutes to seconds.
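The following is a minimal sketch of a vectorized linear regression fit, not a prescribed method. It assumes synthetic data (the weights, bias, and noise level are made up for illustration) and uses 100,000 samples rather than one million to keep it quick. The fit itself is a single call to np.linalg.lstsq, with no Python-level loop over samples.

```python
import numpy as np

# Synthetic data (illustrative only): y = 3*x0 - 2*x1 + 0.5 + small noise
rng = np.random.default_rng(0)
n_samples = 100_000
X = rng.normal(size=(n_samples, 2))
true_weights = np.array([3.0, -2.0])
true_bias = 0.5
y = X @ true_weights + true_bias + rng.normal(scale=0.01, size=n_samples)

# Append a column of ones so the bias is learned as an extra weight
X_design = np.column_stack([X, np.ones(n_samples)])

# Least-squares fit in one vectorized call (no Python-level loops)
coeffs, residuals, rank, singular_values = np.linalg.lstsq(X_design, y, rcond=None)

print(coeffs)  # close to [3.0, -2.0, 0.5]
```

The matrix product X @ true_weights and the least-squares solve both run in compiled linear algebra routines, which is where the speed advantage over nested loops comes from.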

Implementing Vectorized Operations Using NumPy and Pandas for Data Analytics Optimization

NumPy and Pandas are core Python libraries for data analysis that support vectorized computations.

- With NumPy, array operations such as addition, subtraction, multiplication, and dot products are inherently vectorized.

- Pandas operations on Series and DataFrame objects utilize vectorization to perform statistical summaries, data manipulations, and feature engineering efficiently.

Example:

import numpy as np

import pandas as pd

# NumPy vectorized addition

A = np.array([1, 2, 3, 4])

B = np.array([10, 20, 30, 40])

C = A + B # Element-wise addition

print(C) # Output: [11 22 33 44]

# Pandas vectorized operation

df = pd.DataFrame({'A': [1, 2, 3], 'B': [4, 5, 6]})

df['A_plus_B'] = df['A'] + df['B']

print(df)

Comparing Looping vs Vectorized Operations in Data Processing and Machine Learning

Performance Benchmarks: Looping Constructs vs Vectorized Functions

Benchmarks consistently show that vectorized operations outperform explicit loops on large data. For arrays with millions of elements, vectorized NumPy operations are often tens to hundreds of times faster than equivalent Python loops, and in some cases up to 1000 times faster. The exact ratio depends on the operation, the data types, and the hardware. Element-wise addition of two arrays of size 1 million illustrates this speed gap.

Sample benchmark:

import numpy as np

import time

size = 10**6

A = np.random.rand(size)

B = np.random.rand(size)

# Loop method

start_time = time.perf_counter()

result_loop = np.empty(size)

for i in range(size):

    result_loop[i] = A[i] + B[i]

loop_time = time.perf_counter() - start_time

# Vectorized method

start_time = time.perf_counter()

result_vectorized = A + B

vectorized_time = time.perf_counter() - start_time

print(f"Loop time: {loop_time:.4f}s")

print(f"Vectorized time: {vectorized_time:.4f}s")
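A single timed run can be noisy. As a hedged variant of the benchmark above, the sketch below uses the standard-library timeit module to repeat each measurement and keep the best time. The function names and the smaller array size (100,000 instead of 10**6) are choices made here for illustration, so the loop finishes quickly.

```python
import timeit
import numpy as np

size = 100_000  # smaller than the 10**6 used above so the loop finishes quickly
rng = np.random.default_rng(0)
A = rng.random(size)
B = rng.random(size)

def add_with_loop():
    """Element-wise addition with an explicit Python loop."""
    out = np.empty(size)
    for i in range(size):
        out[i] = A[i] + B[i]
    return out

def add_vectorized():
    """Element-wise addition as a single NumPy expression."""
    return A + B

# repeat() runs each measurement several times; the minimum is the least noisy
loop_best = min(timeit.repeat(add_with_loop, number=1, repeat=3))
vec_best = min(timeit.repeat(add_vectorized, number=10, repeat=3)) / 10

print(f"Loop: {loop_best:.5f}s  Vectorized: {vec_best:.5f}s")
print(f"Speedup: {loop_best / vec_best:.0f}x")
```

The reported speedup will vary from machine to machine, which is why no fixed number is quoted here.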

Trade-offs in Readability, Maintainability, and Execution Speed

While vectorization boosts performance, it can obscure logic, especially for beginners unfamiliar with array broadcasting and linear algebra concepts, which hurts readability. Code that relies heavily on complex vectorized expressions may need careful documentation. In the long term, however, vectorized code is often easier to maintain because it is shorter and has fewer moving parts.
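To make the readability trade-off concrete, here is a small sketch with made-up values and a made-up rule (limit values to the range 0 to 5). It expresses the same rule three ways: an explicit loop, nested np.where calls, and np.clip. All three produce the same result, but they differ in how directly they communicate intent.

```python
import numpy as np

values = np.array([-2.0, 0.5, 3.0, -0.1, 7.5])

# Loop version: the clipping rule is spelled out step by step
clipped_loop = []
for v in values:
    if v < 0.0:
        clipped_loop.append(0.0)
    elif v > 5.0:
        clipped_loop.append(5.0)
    else:
        clipped_loop.append(v)

# Vectorized version: same rule as nested np.where calls (compact but denser to read)
clipped_where = np.where(values < 0.0, 0.0, np.where(values > 5.0, 5.0, values))

# A named NumPy function that states the intent directly
clipped_clip = np.clip(values, 0.0, 5.0)

print(clipped_where)
```

When a named function such as np.clip exists for the operation, it usually gives both the speed of vectorization and the clarity of the loop.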

Best Practices for Transitioning from Loops to Vectorized Code in Data Pipelines

- Identify bottlenecks: Profile code to locate slow loops or processing steps.

- Learn NumPy and Pandas functions: Many operations have vectorized equivalents.

- Replace explicit loops: Use NumPy array operations or Pandas vectorized functions.

- Validate results: Ensure correctness after refactoring.

- Document changes: Clarify the logic for future reference.

Example:

Transform nested loops for feature scaling into vectorized operations to improve efficiency.
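A possible version of that refactor is sketched below, assuming the scaling in question is column-wise standardization (subtract the mean, divide by the standard deviation) on a hypothetical random feature matrix. The nested-loop function serves as the reference, and np.allclose performs the validation step from the list above.

```python
import numpy as np

rng = np.random.default_rng(42)
X = rng.normal(loc=10.0, scale=3.0, size=(1_000, 4))  # hypothetical feature matrix

def standardize_loops(X):
    """Column-wise standardization with nested Python loops (slow reference)."""
    n_rows, n_cols = X.shape
    out = np.empty_like(X)
    for j in range(n_cols):
        col_sum = 0.0
        for i in range(n_rows):
            col_sum += X[i, j]
        mean = col_sum / n_rows
        sq_sum = 0.0
        for i in range(n_rows):
            sq_sum += (X[i, j] - mean) ** 2
        std = (sq_sum / n_rows) ** 0.5
        for i in range(n_rows):
            out[i, j] = (X[i, j] - mean) / std
    return out

def standardize_vectorized(X):
    """Same computation; broadcasting applies per-column stats to every row."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

scaled_loops = standardize_loops(X)
scaled_vec = standardize_vectorized(X)

# Validate the refactor before trusting it
assert np.allclose(scaled_loops, scaled_vec)
```

Both versions use the population standard deviation (NumPy's default, ddof=0), so the comparison is like for like.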

Memory Management and Data Types Optimization in Data Science

Optimizing Memory Usage with Data Types in Large-Scale Data Handling

Choosing appropriate data types can substantially reduce memory consumption when handling big datasets. For example, using float32 instead of float64 halves the memory footprint of floating-point data, with minimal precision loss in many applications. Similarly, replacing large integer types with smaller alternatives (int8, int16) reduces memory use, provided the values fit within the smaller type's range.

Practical insight:

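In a dataset with millions of samples, switching from float64 to float32 can save gigabytes of memory, enabling processing on machines with limited resources. For instance, 10 million rows with 100 floating-point columns occupy about 8 GB as float64 and about 4 GB as float32.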
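The effect can be checked directly with NumPy's nbytes attribute. The sketch below uses small illustrative arrays (the ages values are made up) and also shows a range check before narrowing an integer type, since values outside the target range would not survive the conversion.

```python
import numpy as np

n = 1_000_000
data64 = np.ones(n, dtype=np.float64)
data32 = data64.astype(np.float32)

print(data64.nbytes)  # 8000000 bytes
print(data32.nbytes)  # 4000000 bytes

# Precision cost: float32 keeps roughly 7 significant decimal digits
x = np.float64(0.1234567890123)
print(float(np.float32(x)) - float(x))  # small rounding difference

# Small-range integers fit in narrower types, but check the range first
ages = np.array([23, 35, 61, 47], dtype=np.int64)
limits = np.iinfo(np.int8)
if ages.min() >= limits.min and ages.max() <= limits.max:
    ages_small = ages.astype(np.int8)
    print(ages.nbytes, "->", ages_small.nbytes)
```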

Memory Profiling and Debugging for Performance Improvement

Tools such as memory_profiler and pandas’ info() method help identify memory bottlenecks within data pipelines. Profiling reveals large intermediate DataFrames or arrays that can be optimized or discarded promptly. Debugging memory issues ensures models are trained efficiently without exceeding available resources, especially in constrained environments.

Example:

import pandas as pd

df = pd.read_csv('large_dataset.csv')

# info() prints its report directly and returns None, so no print() is needed
df.info(memory_usage='deep')
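For a per-column breakdown, DataFrame.memory_usage(deep=True) returns the byte count of each column. The sketch below builds a small in-memory frame as a stand-in for a real dataset (the column names and values are assumptions for illustration), then compares memory before and after narrowing the data types.

```python
import numpy as np
import pandas as pd

# Small in-memory frame standing in for a real dataset (illustrative values)
n = 100_000
df = pd.DataFrame({
    "price": np.linspace(0.0, 1.0, n),                            # float64
    "quantity": np.arange(n) % 100,                               # default integer type
    "city": np.tile(["Paris", "Tokyo", "Lima", "Oslo"], n // 4),  # repeated strings
})

before = df.memory_usage(deep=True)

optimized = df.copy()
optimized["price"] = optimized["price"].astype("float32")
# downcast picks the smallest integer type that holds all values
optimized["quantity"] = pd.to_numeric(optimized["quantity"], downcast="integer")
# category stores each distinct string once plus small integer codes
optimized["city"] = optimized["city"].astype("category")

after = optimized.memory_usage(deep=True)
print(before)
print(after)
print(f"Total: {before.sum()} -> {after.sum()} bytes")
```

The category conversion pays off here because the column has only four distinct values. For columns where nearly every value is unique, it can cost more memory than it saves.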

Techniques for Managing Memory and Improving Computational Efficiency in Machine Learning Models

- Chunking: Process data in smaller parts rather than loading entire datasets into memory.

- Data Batching: During model training, process data in batches to manage memory.

- Sparse Data Structures: Use sparse matrices for data with many zeros, reducing memory load.

- Data Type Optimization: Convert data to minimal appropriate data types.

- Model Simplification: Use simpler models or feature selection to reduce computational complexity.
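As one illustration of the chunking technique from the list above, the sketch below computes a mean without ever holding the full dataset in memory. An io.StringIO buffer with made-up values stands in for a large file on disk. A real pipeline would pass a file path to read_csv instead.

```python
import io
import pandas as pd

# Stand-in for a large file on disk; a real pipeline would pass a file path
csv_text = "value\n" + "\n".join(str(i) for i in range(1, 1001))
csv_buffer = io.StringIO(csv_text)

total = 0
row_count = 0
# chunksize makes read_csv return an iterator of DataFrames of at most 100 rows
for chunk in pd.read_csv(csv_buffer, chunksize=100, dtype={"value": "int32"}):
    total += int(chunk["value"].sum())  # vectorized sum within each chunk
    row_count += len(chunk)

mean_value = total / row_count
print(mean_value)  # 500.5
```

Only running totals are kept between chunks, so peak memory depends on the chunk size rather than the file size. The dtype argument combines chunking with data type optimization.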

Summary

This study material emphasizes the core techniques for performance optimization in data science and machine learning, focusing on using vectorized operations for efficient numeric computations and advocating best practices for transitioning from loops. Additionally, it highlights the importance of effective memory management through data type optimization and profiling. Implementing these strategies leads to significant reductions in computational time and resource consumption, enabling scalable and efficient data workflows.

Practice Questions

- Explain the concept of vectorization and how it differs from traditional looping in data processing.

Answer: Vectorization replaces explicit iterative loops with array or matrix operations that utilize optimized low-level libraries, achieving faster computations. Unlike loops that process elements sequentially, vectorized operations perform batch computations, leveraging hardware acceleration.

- Convert the following loop into a vectorized NumPy operation:

result = []

for i in range(len(A)):

    result.append(A[i] * 2)

Answer:

result = A * 2

- Why is using vectorized operations beneficial for training machine learning models on large datasets?

Answer: They significantly accelerate computations, reduce execution time, and improve efficiency by utilizing hardware parallelism, which is critical for handling big data.

- Write a Python code snippet that benchmarks loop-based addition versus vectorized addition for arrays of size 1 million.

Answer: (Refer to the benchmark code in the “Performance Benchmarks” section above.)

- Discuss potential drawbacks of relying solely on vectorization in code.

Answer: Overuse can reduce code readability, obscure complex logic, and sometimes make debugging more challenging, especially for those unfamiliar with linear algebra concepts.

- How can choosing proper data types reduce memory usage during large data processing?

Answer: Smaller data types (e.g., float32 instead of float64) consume less memory, enabling processing of larger datasets within limited hardware resources with minimal loss of precision.

- Demonstrate how to profile memory usage of a pandas DataFrame.

Answer:

import pandas as pd

df = pd.read_csv('large_dataset.csv')

df.info(memory_usage='deep')

- Provide an example of a technique to handle big data during machine learning model training to prevent memory overload.

Answer: Process data in chunks or batches, such as using Pandas read_csv with chunksize, or training models on smaller subsets sequentially.

- What are sparse data structures, and when should they be used?

Answer: Sparse structures efficiently store data with many zeros by only recording nonzero elements, useful in domains like natural language processing or recommender systems to save memory.

- Summarize the importance of combining vectorization with memory optimization strategies in data science workflows.

Answer: Combining these techniques ensures fast computations while managing resources effectively, enabling scalable, efficient processing of large datasets and complex models.

Study Resources

This concludes the structured, theoretical exploration of performance optimization techniques in data science and machine learning, emphasizing vectorization methods, benchmarking, and memory management strategies for effective large-scale data processing.
