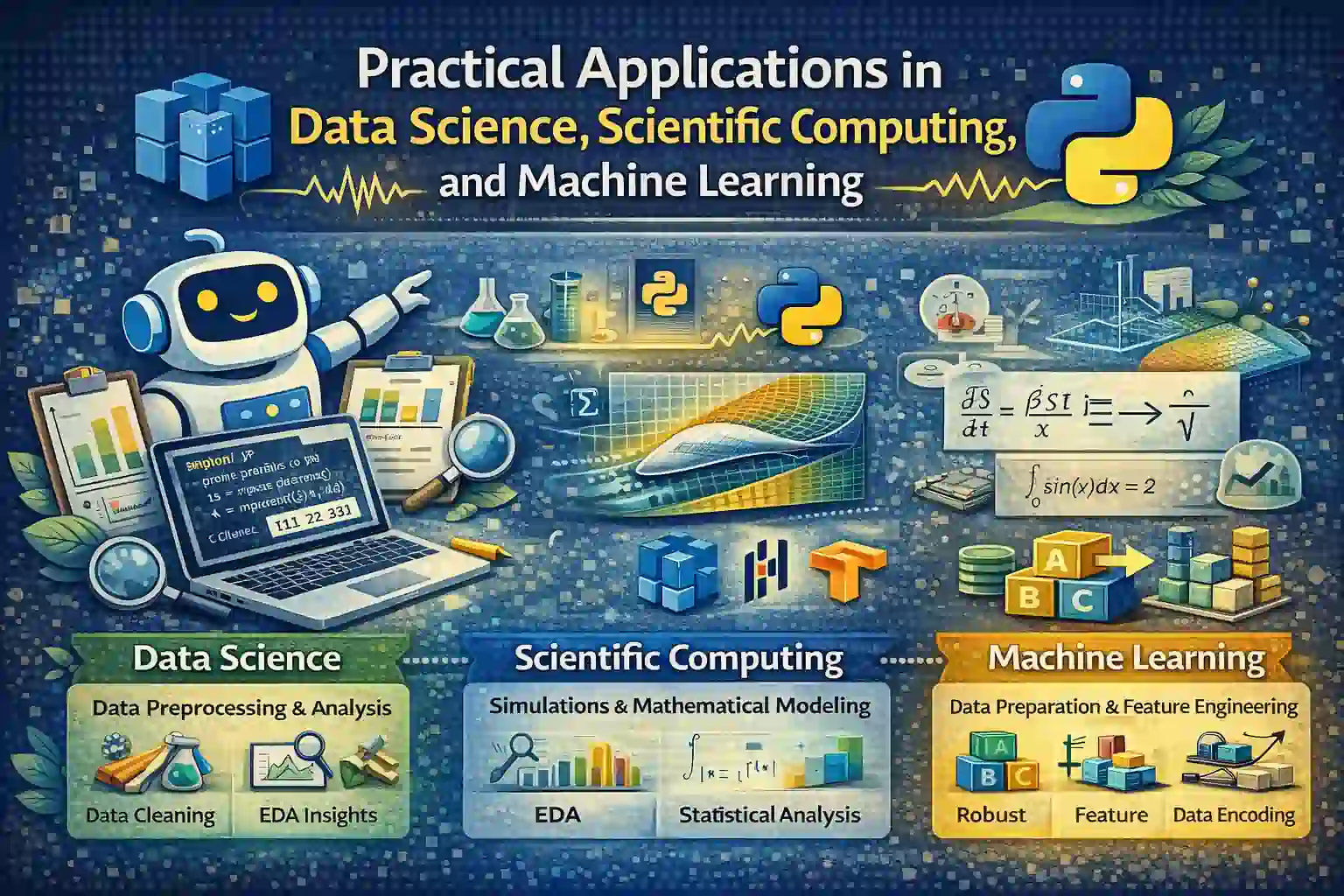

This study material provides a comprehensive overview of how theoretical principles underpin practical applications in data science, scientific computing, and machine learning. It emphasizes concepts essential for transforming raw data and mathematical models into actionable insights, reliable simulations, and optimized machine learning systems.

1. Data Science: Data Preprocessing and Analysis

Data Cleaning and Data Wrangling with Data Science Tools

Concepts:

Data cleaning involves identifying and correcting errors or inconsistencies in datasets to ensure accuracy. Data wrangling refers to transforming raw data into a structured format suitable for analysis. Techniques include handling missing values, removing duplicates, correcting inconsistent entries, and addressing noise and outliers.

Handling missing data, for example, can involve imputation (filling gaps with the mean, median, or mode) or removal of the affected rows when they make up a negligible share of the data. Outliers, extreme data points that deviate significantly from other observations, can distort analysis; statistical tests and visualization help detect and handle them.

Tools:

Python libraries like Pandas and NumPy facilitate efficient data manipulation. Pandas DataFrames support data cleaning functions such as .fillna(), .dropna(), and .replace().

Example:

import pandas as pd

import numpy as np

df = pd.DataFrame({'A': [1, 2, np.nan, 4, 100], 'B': ['x', 'y', 'y', None, 'z']})

# Handle missing values

df['A'] = df['A'].fillna(df['A'].mean()) # Replace NaN with the mean of the observed values

df['B'] = df['B'].fillna('unknown') # Fill missing categorical data

print(df)

Outcome:

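The missing value in column ‘A’ is replaced with the column mean (26.75, which the extreme value 100 pulls upward; the median would be a more robust choice here), and the missing ‘B’ entry is filled with 'unknown'.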
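The concepts above also mention duplicates and inconsistent entries, which the example does not cover. A minimal sketch, assuming a hypothetical `raw` DataFrame whose `city` values differ only in case and surrounding whitespace:

```python
import pandas as pd

# Hypothetical data: the same city is spelled three different ways.
raw = pd.DataFrame({
    "city": ["Paris", " paris", "PARIS", "Rome", "Rome"],
    "sales": [10, 10, 10, 7, 7],
})

# Normalize the text so equivalent entries compare equal.
raw["city"] = raw["city"].str.strip().str.title()

# Drop rows that are now exact duplicates, keeping the first occurrence.
deduped = raw.drop_duplicates().reset_index(drop=True)

print(deduped)
```

Normalizing before deduplicating matters: without the first step, `drop_duplicates()` would treat the three spellings of Paris as distinct rows.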

Exploratory Data Analysis (EDA) for Business Insights

Concepts:

EDA involves summarizing data sets visually and statistically to discover patterns, relationships, and anomalies. Visualization tools such as Matplotlib and Seaborn are essential for plotting histograms, scatter plots, and heatmaps.

Purpose:

Identify trends, correlations, and outliers that influence decision-making. For example, a sales dataset may reveal seasonal effects or product performance correlations.

Example:

import seaborn as sns

import matplotlib.pyplot as plt

# Assuming df contains sales data with features 'Price' and 'Units Sold'

sns.scatterplot(x='Price', y='Units Sold', data=df)

plt.title('Price vs Units Sold')

plt.show()

Outcome:

Visualization reveals the relationship between product price and sales volume, guiding pricing strategies.
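The statistical side of EDA can be sketched alongside the plot. The example below assumes a small, made-up `sales` DataFrame (the values are illustrative, not real data) and computes a descriptive summary and the correlation that the scatter plot shows visually:

```python
import pandas as pd

# Hypothetical sales data, made up for illustration.
sales = pd.DataFrame({
    "Price": [10, 12, 14, 16, 18, 20],
    "Units Sold": [200, 185, 160, 150, 120, 100],
})

# Descriptive summary: count, mean, std, min, quartiles, max per column.
print(sales.describe())

# Pearson correlation between the two columns. It is close to -1 here
# because units sold fall almost linearly as price rises.
corr = sales["Price"].corr(sales["Units Sold"])
print(f"Correlation: {corr:.3f}")
```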

Statistical Data Analysis in Data Science Workflows

Concepts:

Applying descriptive statistics (mean, median, variance) quantifies data characteristics. Inferential statistics, including hypothesis testing and regression analysis, enable insights about entire populations from samples.

Regression models, such as linear regression, predict dependent variables based on independent variables, facilitating forecasting. Hypothesis testing (e.g., t-tests) assesses the significance of observed differences.

Example:

import statsmodels.api as sm

X = df[['Price']]

Y = df['Units Sold']

X = sm.add_constant(X)

model = sm.OLS(Y, X).fit()

print(model.summary())

Outcome:

The regression summary reports the estimated effect of price on units sold, together with standard errors, p-values, and R-squared, providing statistical evidence to support business decisions.
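The hypothesis testing mentioned above can be sketched in the same spirit. The example assumes synthetic daily sales for two hypothetical store layouts (`layout_a`, `layout_b`) and uses Welch's t-test from SciPy; the data and group names are illustrative only:

```python
import numpy as np
from scipy import stats

# Synthetic daily sales for two store layouts (hypothetical data).
rng = np.random.default_rng(seed=0)
layout_a = rng.normal(loc=100, scale=10, size=50)
layout_b = rng.normal(loc=115, scale=10, size=50)

# Welch's t-test: does not assume the two groups share a variance.
result = stats.ttest_ind(layout_a, layout_b, equal_var=False)
t_stat, p_value = result.statistic, result.pvalue

print(f"t = {t_stat:.2f}, p = {p_value:.4g}")
```

A small p-value suggests that the difference in group means is unlikely to be due to sampling noise alone; it does not by itself say how large or how important the difference is.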

Data Transformation and Feature Scaling for Improved Data Modeling

Concepts:

Feature scaling matters for distance-based and margin-based algorithms (e.g., k-NN, SVM), where a feature with a large numeric range would otherwise dominate the distance computation. Normalization rescales features to [0, 1], while standardization rescales them to zero mean and unit variance.

Encoding categorical variables (e.g., ‘Country’) into numerical forms—like One-Hot Encoding—enables algorithms that require numerical input.

Example:

from sklearn.preprocessing import StandardScaler, OneHotEncoder

scaler = StandardScaler()

scaled_features = scaler.fit_transform(df[['Price']])

encoder = OneHotEncoder(sparse_output=False) # 'sparse' was renamed to 'sparse_output' in scikit-learn 1.2

category_encoded = encoder.fit_transform(df[['Country']])

Outcome:

Features are now in a numeric, comparably scaled form that models can consume, which often improves accuracy for scale-sensitive algorithms.
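To make the two scaling formulas concrete, the sketch below applies them by hand with NumPy. The `prices` array is a made-up example:

```python
import numpy as np

prices = np.array([10.0, 20.0, 30.0, 40.0, 50.0])  # hypothetical values

# Min-max normalization: (x - min) / (max - min), result lies in [0, 1].
normalized = (prices - prices.min()) / (prices.max() - prices.min())

# Standardization: (x - mean) / std, result has mean 0 and std 1.
# np.std defaults to the population formula (ddof=0), as StandardScaler does.
standardized = (prices - prices.mean()) / prices.std()

print(normalized)
print(standardized)
```

In practice the scaler should be fitted on the training data only and then reused to transform validation and test data, so that no information leaks from the held-out sets.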

2. Scientific Computing: Simulations and Mathematical Modeling

Numerical Methods for Scientific Computing Simulations

Concepts:

Numerical methods translate mathematical models into algorithms that approximate solutions to problems with no convenient closed form. Finite difference methods approximate derivatives on a grid, and finite element methods solve partial differential equations that arise in physics and engineering.

Numerical integration (e.g., the trapezoidal rule or Simpson’s rule) estimates the area under a curve from sampled values, a building block in simulations of physical phenomena such as heat transfer or fluid flow.
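The finite difference idea can be illustrated with a single derivative. A minimal sketch, in which `central_difference` is a helper name introduced here for illustration and the step size `h` is an assumed default:

```python
import numpy as np

def central_difference(f, x, h=1e-5):
    """Approximate f'(x) with the central difference (f(x+h) - f(x-h)) / (2h)."""
    return (f(x + h) - f(x - h)) / (2 * h)

x0 = 1.0
approx = central_difference(np.sin, x0)
exact = np.cos(x0)  # known derivative of sin, used only to check the error

print(f"approx = {approx:.8f}, exact = {exact:.8f}")
```

The truncation error of the central difference shrinks with the square of `h`, but making `h` too small amplifies floating-point rounding, so the step size is a trade-off.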

Example:

import numpy as np

x = np.linspace(0, np.pi, 100)

integral = np.trapezoid(np.sin(x), x) # Trapezoidal rule; NumPy >= 2.0 (use np.trapz on older versions)

print(f"Numerical integral of sin(x) over [0, π]: {integral}")

Outcome:

The approximation (about 1.9998 with 100 sample points) closely matches the analytical value \(2\). The same approach supports simulations of wave behavior, heat equations, and similar problems.
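Simpson’s rule, mentioned in the concepts, can be compared with the trapezoidal rule on the same integral. This sketch assumes SciPy is available and uses its `simpson` and `trapezoid` functions:

```python
import numpy as np
from scipy.integrate import simpson, trapezoid

# An odd number of points gives an even number of intervals,
# which is the classic setting for composite Simpson's rule.
x = np.linspace(0, np.pi, 101)
y = np.sin(x)

trap_estimate = trapezoid(y, x=x)
simpson_estimate = simpson(y, x=x)

# The analytical value of the integral is 2.
print(f"Trapezoid error: {abs(trap_estimate - 2):.2e}")
print(f"Simpson error:   {abs(simpson_estimate - 2):.2e}")
```

For a smooth integrand like this one, Simpson’s rule is typically several orders of magnitude more accurate at the same number of sample points.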

Computational Physics and Engineering Simulations

Concepts:

Simulations model physical systems, such as fluid flow or thermodynamic processes, using numerical algorithms to analyze complex behaviors that are analytically intractable. Sufficient numerical precision and stable algorithms are needed for reliable results.

Application:

Modeling airflow over an aircraft wing involves solving Navier-Stokes equations using CFD (Computational Fluid Dynamics).
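A full Navier-Stokes solver is beyond a short example, so the sketch below uses a much simpler stand-in: the 1D heat equation on a rod, solved with an explicit finite difference scheme. The rod length, diffusivity, grid size, and initial profile are all assumed values chosen so that the result can be checked against a known analytical solution:

```python
import numpy as np

# Hypothetical setup: a rod of unit length, both ends held at temperature 0.
alpha = 1.0                 # thermal diffusivity (assumed value)
nx = 51                     # number of grid points
dx = 1.0 / (nx - 1)
dt = 0.4 * dx**2 / alpha    # satisfies the stability limit dt <= dx^2 / (2 * alpha)
steps = 500

x = np.linspace(0.0, 1.0, nx)
u = np.sin(np.pi * x)       # initial temperature profile

for _ in range(steps):
    # Second spatial derivative by central differences on interior points.
    laplacian = (u[2:] - 2 * u[1:-1] + u[:-2]) / dx**2
    u[1:-1] = u[1:-1] + alpha * dt * laplacian   # forward Euler step in time
    u[0] = u[-1] = 0.0                           # boundary conditions

# For this initial profile the exact solution is a decaying sine wave.
t_final = steps * dt
exact = np.exp(-alpha * np.pi**2 * t_final) * np.sin(np.pi * x)
max_error = np.max(np.abs(u - exact))
print(f"max error vs analytical solution: {max_error:.2e}")
```

Production CFD codes use far more elaborate discretizations, but the pattern is the same: discretize space, step forward in time, and respect the scheme's stability limit.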

Mathematical Modeling of Real-World Systems

Concepts:

Mathematical modeling translates scientific phenomena into equations and algorithms for analysis and prediction. Differential equations describe dynamic systems; linear algebra handles systems with multiple dependencies.

Example:

Modeling the spread of an infectious disease uses the SIR (Susceptible-Infected-Recovered) model composed of differential equations.
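In its standard form the SIR model reads dS/dt = -βSI/N, dI/dt = βSI/N - γI, dR/dt = γI. A minimal sketch that integrates these equations with SciPy's `solve_ivp`, using illustrative parameter values that are not fitted to any real outbreak (`sir_rhs` is a helper name introduced here):

```python
import numpy as np
from scipy.integrate import solve_ivp

def sir_rhs(t, y, beta, gamma, n):
    """Right-hand side of the SIR equations."""
    s, i, r = y
    new_infections = beta * s * i / n
    recoveries = gamma * i
    return [-new_infections, new_infections - recoveries, recoveries]

# Illustrative parameter values, not fitted to any real outbreak.
n = 1000.0                 # total population
beta, gamma = 0.3, 0.1     # transmission and recovery rates per day
y0 = [n - 1.0, 1.0, 0.0]   # one initial infection

solution = solve_ivp(
    sir_rhs, (0, 160), y0, args=(beta, gamma, n),
    t_eval=np.linspace(0, 160, 161),
)
s, i, r = solution.y

print(f"Peak infections: {i.max():.0f} on day {solution.t[i.argmax()]:.0f}")
```

The three compartments always sum to the population size, which gives a quick sanity check on any implementation.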

Visualization of Scientific Data for Better Interpretation

Concepts:

Visual tools like Matplotlib, Plotly, or ParaView enable scientists to interpret complex simulation data through 2D/3D plots. Effective visualization uncovers insights about system behaviors.

For example, 3D visualization of flow in fluid dynamics helps identify turbulent regions or boundary layers.

3. Machine Learning: Data Preparation and Feature Engineering

Data Cleaning and Outlier Removal for Robust Models

Concepts:

Quality data is critical for machine learning accuracy. Outliers—data points significantly different from others—can bias models. Techniques such as the Interquartile Range (IQR) or Z-score identify anomalies.

Example:

import numpy as np

from scipy import stats

z_scores = np.abs(stats.zscore(df['Units Sold']))

df_clean = df[z_scores < 3] # Keep rows within 3 standard deviations of the mean

Outcome:

Removing extreme values keeps a few anomalous points from dominating the fit, which generally makes the model more robust.
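The IQR technique named in the concepts can be sketched as follows. The `units` series is made-up data, and the 1.5 multiplier is the conventional Tukey choice rather than anything prescribed by the text:

```python
import pandas as pd

units = pd.Series([12, 15, 14, 13, 16, 15, 14, 90])  # hypothetical data

q1 = units.quantile(0.25)
q3 = units.quantile(0.75)
iqr = q3 - q1

# Tukey's rule: flag points more than 1.5 * IQR outside the quartiles.
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
mask = units.between(lower, upper)
cleaned = units[mask]

print(f"Bounds: [{lower}, {upper}]")
print(cleaned.tolist())
```

On a sample this small, a Z-score cutoff of 3 would not flag the value 90, because the outlier itself inflates the standard deviation. The IQR rule is based on quartiles and is less affected by that.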

Feature Extraction and Feature Selection

Concepts:

Feature engineering transforms raw data into meaningful features, which helps models learn more effectively. Dimensionality reduction techniques like PCA (Principal Component Analysis) and feature selection methods reduce noise and redundancy, improving computational efficiency.

Example:

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

principal_components = pca.fit_transform(df.select_dtypes(include='number')) # PCA needs numeric columns (at least two, no NaN)

Outcome:

The feature space is reduced to two components that capture as much of the variance as two dimensions allow, which can improve model performance and reduce training cost.
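How much information the reduced space retains can be checked through the explained variance ratio. A sketch on synthetic data, assuming scikit-learn is available; the three features are generated here so that two of them are nearly redundant:

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic data: three features, two of which are strongly correlated.
rng = np.random.default_rng(seed=0)
base = rng.normal(size=200)
features = np.column_stack([
    base,
    2 * base + rng.normal(scale=0.1, size=200),  # nearly redundant with column 0
    rng.normal(size=200),                        # independent feature
])

# PCA is sensitive to scale, so standardize first.
scaled = StandardScaler().fit_transform(features)

pca = PCA(n_components=2)
components = pca.fit_transform(scaled)

print(pca.explained_variance_ratio_)        # variance share per component
print(pca.explained_variance_ratio_.sum())  # total share retained
```

Because one feature is almost a copy of another, two components retain nearly all of the variance. On real data the retained share should be inspected before choosing `n_components`.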

Encoding Categorical Data for Machine Learning Algorithms

Concepts:

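Categorical data must be converted into numerical form. One-Hot Encoding creates one binary column per category, while Label Encoding assigns each category an integer code. Integer codes suit tree-based models such as decision trees; for linear models such as logistic regression, One-Hot Encoding is usually safer because the codes imply an ordering that may not exist.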

Example:

from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

df['Country_Encoded'] = le.fit_transform(df['Country'])

Outcome:

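An encoding that matches the algorithm's assumptions helps classification models learn from categorical features. Note that scikit-learn documents LabelEncoder as an encoder for target labels; OrdinalEncoder is its counterpart for feature columns.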
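The difference between the two encodings is easiest to see side by side. This sketch uses pandas only, with a made-up `countries` DataFrame:

```python
import pandas as pd

countries = pd.DataFrame({"Country": ["India", "France", "India", "Japan"]})

# One-hot encoding: one indicator column per category, no implied order.
one_hot = pd.get_dummies(countries["Country"], prefix="Country")

# Integer codes: compact, but the numbers imply an order
# (France < India < Japan) that exists only alphabetically.
codes = countries["Country"].astype("category").cat.codes

print(one_hot)
print(codes.tolist())  # [1, 0, 1, 2]
```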

Data Augmentation and Resampling for Class Imbalance

Concepts:

Handling imbalanced datasets involves techniques like oversampling minority classes, undersampling majority ones, or generating synthetic data (SMOTE) to improve model generalization.

Example:

from imblearn.over_sampling import SMOTE

smote = SMOTE()

X_resampled, y_resampled = smote.fit_resample(X, y)

Outcome:

Balancing the classes reduces the model's bias toward the majority class. Resampling should be applied to the training split only, so that evaluation reflects the original class distribution.
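Plain random oversampling, the simplest of the techniques listed above, can be sketched with pandas alone. The `data` frame is hypothetical, and this approach duplicates existing minority rows rather than synthesizing new ones as SMOTE does:

```python
import pandas as pd

# Hypothetical imbalanced data: 8 negatives, 2 positives.
data = pd.DataFrame({
    "feature": [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 2.1, 2.3],
    "label":   [0, 0, 0, 0, 0, 0, 0, 0, 1, 1],
})

majority = data[data["label"] == 0]
minority = data[data["label"] == 1]

# Random oversampling: draw minority rows with replacement until the
# class sizes match.
minority_upsampled = minority.sample(n=len(majority), replace=True, random_state=0)

balanced = pd.concat([majority, minority_upsampled]).reset_index(drop=True)
counts = balanced["label"].value_counts()
print(counts)
```

Because the added rows are exact copies, random oversampling can encourage overfitting to the few minority examples; SMOTE and class weighting are common alternatives.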

Resources for Further Study

This structured overview bridges foundational theory and practical application, equipping students with the knowledge to leverage data science, scientific computing, and machine learning techniques effectively across various industries.
